New & Notable

How AI is transforming financial forecasting for SMBs and enterprises

Dan Wilson | July 15, 2025 at 2:57 pmTop Webinar

Recently Added

How AI is transforming financial forecasting for SMBs and enterprises

Dan Wilson | July 15, 2025 at 2:57 pmPerspectives from various industries. Financial forecasting has long been a cornerstone of strategic planning, essential...

Why good data doesn’t guarantee good decisions

Lenard Lim | July 14, 2025 at 5:25 pmIt’s easy to assume that more data—or cleaner dashboards—will automatically lead to better decisions. But after wo...

How to architect a scalable data pipeline for HealthTech applications

Gaurav Belani | July 9, 2025 at 10:00 amHealthTech runs on data. From patient vitals and lab results to insurance claims and wearable device streams, there’s ...

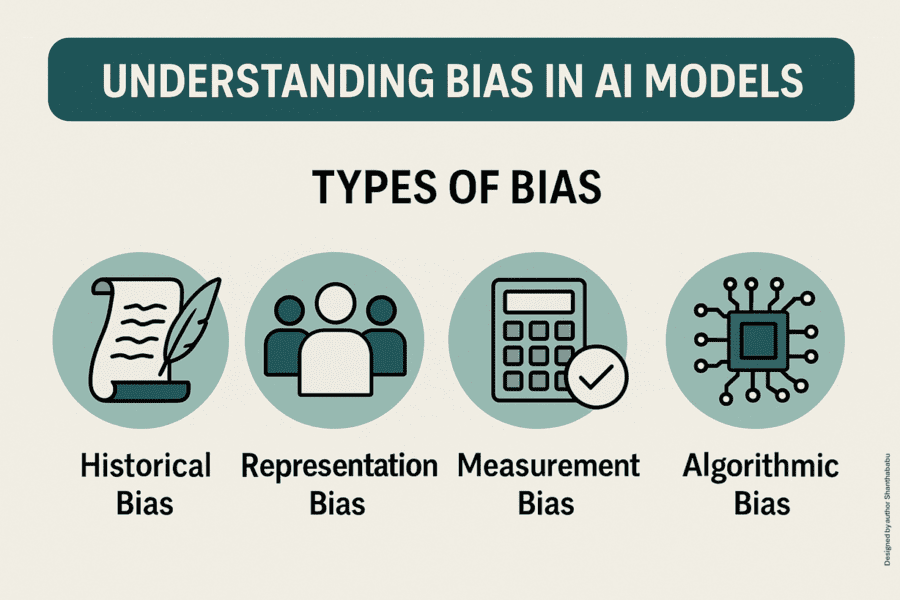

Ethics-driven model auditing and bias mitigation

Shanthababu Pandian | July 7, 2025 at 3:06 pmIntroduction Artificial intelligence (AI) and machine learning (ML) systems are becoming increasingly integral to decisi...

How agentic AI reshapes your development

Gaurav Belani | July 7, 2025 at 12:02 pmArtificial Intelligence has moved past its earlier, more passive role of assisting with data processing and predictions....

Utilize machine learning to improve employee retention rates

Zachary Amos | June 30, 2025 at 10:59 amEmployee turnover is one of the most pressing challenges modern businesses face. It drains resources, lowers morale and ...

The hidden cost of over-instrumentation: Why more tracking can hurt product teams

Lenard Lim | June 17, 2025 at 10:22 amStop tracking everything: Rethink your data strategy If you’ve ever opened a product analytics dashboard and scrolled ...

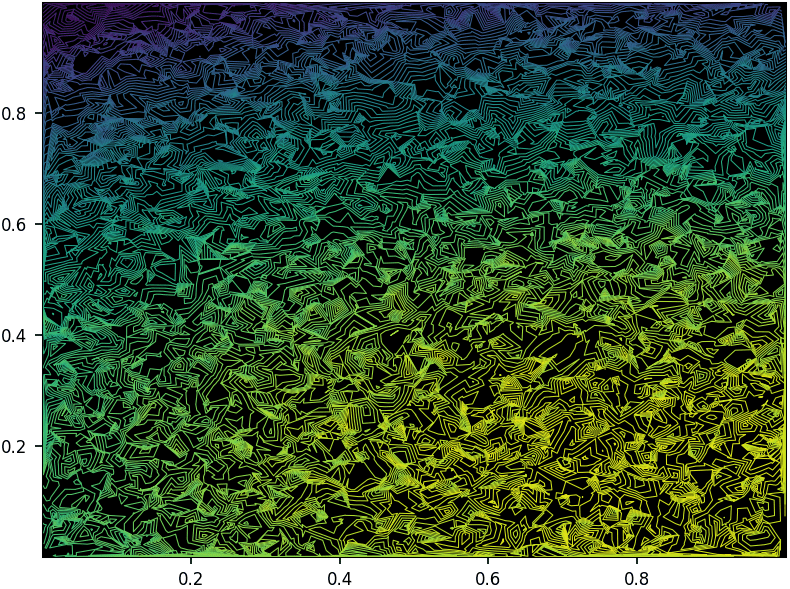

How to Build and Optimize High-Performance Deep Neural Networks from Scratch

Vincent Granville | June 13, 2025 at 5:06 pmWith explainable AI, intuitive parameters easy to fine-tune, versatile, robust, fast to train, without any library other...

Unlocking the value of AI with DevOps accelerated MLOps

Janne Saarela | June 11, 2025 at 3:27 pmSince the early 2000s, the advent of digital transformation has compelled businesses across industries to reimagine thei...

Revamping enterprise content management with language models

Jelani Harper | June 9, 2025 at 11:23 amThe relatively recent capacity for front-end users to interface with backend systems, documents, and other content via n...

New Videos

A/B Testing Pitfalls – Interview w/ Sumit Gupta @ Notion

Interview w/ Sumit Gupta – Business Intelligence Engineer at Notion In our latest episode of the AI Think Tank Podcast, I had the pleasure of sitting…

Davos World Economic Forum Annual Meeting Highlights 2025

Interview w/ Egle B. Thomas Each January, the serene snow-covered landscapes of Davos, Switzerland, transform into a global epicenter for dialogue on economics, technology, and…

A vision for the future of AI: Guest appearance on Think Future 1039

As someone who has spent years navigating the exciting and unpredictable currents of innovation, I recently had the privilege of joining Chris Kalaboukis on his show, Think Future.…